HPC/Submitting and Managing Jobs/Example Job Script: Difference between revisions

Latest revision as of 22:09, October 8, 2019

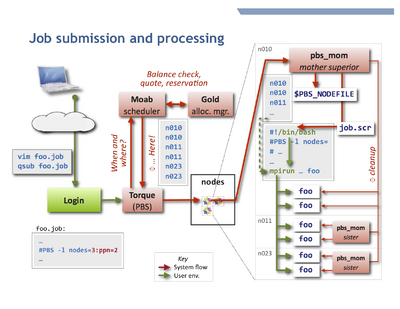

Introduction

A Torque job script is usually a shell script that begins with PBS directives. Directives are comment lines to the shell but are interpreted by Torque, and take the form

#PBS qsub_options

The job script is read by the qsub job submission program, which interprets the directives and places the job in the queue accordingly. Review the qsub man page to learn about the accepted options and the environment variables provided to the job script at execution time.

- Note: Place directives only at the beginning of the job script. Torque ignores directives after the first executable statement in the script. Empty lines are allowed, but not recommended. Best practice is to have a single block of directives at the beginning of the file.

- Advanced usage:

  - The job script may be written in any scripting language, such as Perl or Python. The interpreter is specified in the first script line in Unix hash-bang syntax (for example #!/usr/bin/perl), or with the qsub -S path_list option.

  - The default directive token #PBS can be changed or unset entirely with the qsub -C option; see qsub, sec. Extended Description.

Application-specific job scripts

For most scalar and MPI-based parallel jobs on Carbon the scripts in the next section will be appropriate. Some applications, however, require customizations, typically copying $TMPDIR to an app-specific variable or calling MPI through app-specific wrapper scripts. Such application-specific custom scripts are located at the root directory of the application under /opt/soft, and can typically be reached as:

$APPNAME_HOME/APPNAME.job

or

$APPNAME_HOME/sample.job

where APPNAME is the module name in all uppercase, with "-" (minus) characters replaced by "_" (underscore).

To find variables of this form:

env | grep _HOME
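The naming rule above can also be applied programmatically. The following is a minimal sketch; the function names and the module name "my-app" are made up for illustration, and it selects only variables whose name ends in _HOME, which is slightly stricter than the grep shown above.

```python
import os


def home_variable(module_name):
    """Return the *_HOME variable name expected for a module name."""
    # Upper-case the module name and replace "-" by "_", as described above.
    return module_name.upper().replace("-", "_") + "_HOME"


def home_variables(environ=os.environ):
    """Return all environment variables whose name ends in _HOME."""
    return {name: value for name, value in environ.items()
            if name.endswith("_HOME")}
```

For example, home_variable("my-app") yields "MY_APP_HOME", and home_variables() lists the application roots known to the current shell environment.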

Generic job scripts

Here are a few example jobs for the most common tasks. Note how the PBS directives are mostly independent from the type of job, except for the node specification.

OpenMPI, InfiniBand

This is the default user environment. The openmpi and icc, ifort, mkl modules are preloaded in the system's shell startup files.

The InfiniBand fast interconnect is selected in the openmpi module by means of the environment variable $OMPI_MCA_btl.

#!/bin/bash
#
# Basics: Number of nodes, processors per node (ppn), and walltime (hhh:mm:ss)
#PBS -l nodes=5:ppn=8
#PBS -l walltime=0:10:00
#PBS -N job_name
#PBS -A account
#
# File names for stdout and stderr. If not set here, the defaults
# are <JOBNAME>.o<JOBNUM> and <JOBNAME>.e<JOBNUM>
#PBS -o job.out
#PBS -e job.err
#
# Send mail at begin, end, abort, or never (b, e, a, n). Default is "a".
#PBS -m ea

# change into the directory where qsub was executed
cd $PBS_O_WORKDIR

# start MPI job over default interconnect; count allocated cores on the fly.
mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    programname

- Updated 2012-07: Beginning with Torque-3.0, $PBS_NP can be used instead of the former construct $(wc -l < $PBS_NODEFILE).
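For job scripts written in Python (see further below), the same core count can be obtained as in the following sketch. The function name is hypothetical, and the fallback to 1 outside of a job is an assumption made here for interactive testing, not Torque behavior.

```python
import os


def allocated_cores(environ=os.environ):
    """Return the number of cores allocated to the job."""
    # Preferred: Torque >= 3.0 exports the count directly.
    if "PBS_NP" in environ:
        return int(environ["PBS_NP"])
    # Former construct: count the lines of the node file,
    # equivalent to $(wc -l < $PBS_NODEFILE).
    nodefile = environ.get("PBS_NODEFILE")
    if nodefile:
        with open(nodefile) as f:
            return sum(1 for _ in f)
    # Assumption: not running inside a job, so act as a serial run.
    return 1
```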

- If your program reads from files or takes options and/or arguments, use and adjust one of the following forms:

mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    programname < run.in

mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    programname -options arguments < run.in

mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    programname < run.in > run.out 2> run.err

- In the last form, anything after programname is optional. If you use specific redirections for stdout or stderr as shown (>, 2>), the job-global files job.out, job.err declared earlier will remain empty or only contain output from your shell startup files (which should really be silent), and the rest of your job script.

OpenMPI, Ethernet

To select Ethernet transport, such as for embarrassingly parallel jobs, specify an -mca option:

mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    -mca btl self,tcp \
    programname

Intel-MPI

Under Intel-MPI the job script will be:

#!/bin/bash
#PBS ... (same as above)

cd $PBS_O_WORKDIR

mpiexec.hydra -machinefile $PBS_NODEFILE -np $PBS_NP \
    programname

Advanced Job scripts

Multithreading using OpenMP

See HPC/Submitting and Managing Jobs/Advanced node selection#Multithreading using OpenMP.

GPU nodes

As of late 2012, Carbon has 28 nodes with 1 NVIDIA C2075 GPU each. To specifically request GPU nodes, add :gpus=1 to the nodes request. At the moment this is synonymous with but preferable to specifying …:gen3.

#PBS -l nodes=N:ppn=PPN:gpus=1

- The gpus=… modifier refers to GPUs per node and currently must always be 1.

- GPU support depends on the application, in particular if several MPI processes or OpenMP threads can share a GPU. Test and optimize the N and PPN parameters for your situation. Start with nodes=1:ppn=4.

- Each GPU node has 12 regular CPU cores; if you submit jobs with ppn < 12 and :gpus=1 the node may be shared with pure CPU jobs. It is to be tested if and how much interference this causes for either job. See Advanced node selection to reserve entire nodes while controlling ppn for MPI or OpenMP.

Job scripts in other scripting languages

The job scripts shown above are written in bash, a Unix command shell language. Actually, qsub accepts scripts in pretty much any scripting language. The only pieces that Torque reads are the Torque directives, up to the first script command, i.e., a line which is (a) not empty, (b) not a PBS directive, and (c) not a "#"-style comment. The script interpreter is chosen by the Linux kernel from the first line of the script.
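The scanning rule just described can be illustrated with a short sketch. This mimics the rule as stated in the text and is not Torque's actual parser; the function name is hypothetical, and details such as the handling of leading whitespace are assumptions.

```python
def pbs_directives(script_text, token="#PBS"):
    """Collect directive arguments up to the first script command."""
    directives = []
    for line in script_text.splitlines():
        stripped = line.strip()
        if stripped.startswith(token):
            # A directive: keep everything after the token.
            directives.append(stripped[len(token):].strip())
        elif stripped == "" or stripped.startswith("#"):
            # Empty line or "#"-style comment (including the #! line): skip.
            continue
        else:
            # First script command: later directives are ignored.
            break
    return directives
```

Applied to a script in which a #PBS line follows the first command, the function returns only the directives that precede that command.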

As with any job, the script execution during the job begins in the home directory, so one of the first commands in the script should be the equivalent of cd $PBS_O_WORKDIR.

If you wish to test your script interactively, you must avoid accessing the PBS_O_WORKDIR variable unconditionally, because it is set only inside a job.

(This problem, and a solution, exists for bash-based job scripts as well, but usually having to use mpirun and to name the machinefile makes the expected environment more obvious.)

Then, a job script in Python would typically begin like this:

#!/usr/bin/env python3
#PBS -l nodes=N:ppn=PPN
#PBS -l walltime=hh:mm:ss
#PBS -N job_name
#PBS -A account
#PBS -o filename
#PBS -j oe
#PBS -m ea

import os

if "PBS_O_WORKDIR" in os.environ:
    os.chdir(os.environ["PBS_O_WORKDIR"])

# body of script here …

Important: The job script interpreter only ever runs in serial, on one core. Any parallelization must be initiated by one of the following mechanisms:

- Calling a child application that runs in parallel (e.g. VASP). Such a child script or application either needs to be aware of or be told to use $PBS_NODEFILE. When the child application finishes, the calling script collects the results and typically iterates until some criterion is met. This calling model is perfectly reasonable as long as the "controlling" application doesn't dominate the time required to shepherd its child calculations.

- OpenMP

- Python multiprocessing

- MPI (e.g. Mpi4Py)

The latter is not to be confused with using mpirun to start several Python interpreters in parallel, which is how GPAW and ATK operate:

#!/bin/bash
…
mpirun -machinefile $PBS_NODEFILE -np $PBS_NP \
    gpaw-python script.py

#!/bin/bash
…
mpiexec.hydra -machinefile $PBS_NODEFILE -np $PBS_NP \
    atkpython script.py
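Returning to the first mechanism in the list above (a serial Python job script that calls a parallel child application), the following sketch shows how such a script might assemble and launch the mpirun command used elsewhere on this page. The helper names are hypothetical and programname is a placeholder; only the standard subprocess module is used.

```python
import os
import subprocess


def mpirun_command(program, args=(), environ=os.environ):
    """Build the mpirun argument list for the nodes of the current job."""
    # Both variables are provided by Torque inside a job only.
    nodefile = environ["PBS_NODEFILE"]
    nprocs = environ["PBS_NP"]
    return ["mpirun", "-machinefile", nodefile, "-np", nprocs,
            program, *args]


def run_child(program, args=(), environ=os.environ):
    """Run the child application and wait until it finishes."""
    # check=True raises CalledProcessError on a non-zero exit status.
    return subprocess.run(mpirun_command(program, args, environ), check=True)
```

Inside a job, the controlling script would call run_child("programname", ["-options", "arguments"]) in a loop, inspect the results after each call, and stop once its criterion is met.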

The account parameter

The parameter for option -A account can take the following forms:

- cnm23456: for most jobs, containing your 5-digit proposal number.

- user: (the actual string "user", not your user name) for a limited personal startup allocation.

- staff: for discretionary access by CNM staff.
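A script that generates job files could check the account string against these three forms before submission. This is a sketch only: the pattern is inferred from the example cnm23456 above and the function name is hypothetical; the scheduler remains the authority on which accounts are valid.

```python
import re

# "cnm" followed by exactly five digits (the proposal number).
_PROPOSAL_ACCOUNT = re.compile(r"cnm\d{5}")


def looks_like_account(account):
    """Return True if the string matches one of the documented forms."""
    if account in ("user", "staff"):
        return True
    return _PROPOSAL_ACCOUNT.fullmatch(account) is not None
```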

You can check your account balance in hours as follows:

mybalance -h

gbalance -u $USER -h

- The relevant column is Available, accounting for amounts reserved by current jobs and credits.

Timed Allocations

See HPC/FAQ#What's my account balance?.

Advanced node selection

You can refine the node selection (normally done via the PBS resource -l nodes=…) to finely control your node and core allocations. You may need to do so for the following reasons:

- select specific node hardware generations,

- permit shared vs. exclusive node access,

- vary PPN across the nodes of a job,

- accommodate multithreading (OpenMP).

See HPC/Submitting Jobs/Advanced node selection for these topics.