HPC/Submitting and Managing Jobs

Introduction

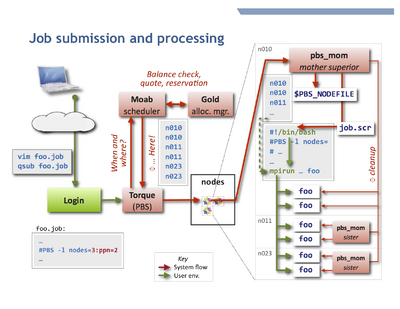

To accept and run jobs, Carbon uses the Torque Resource Manager, which is a variant of PBS. A separate software component called a scheduler chooses the nodes and the start time for jobs according to the job parameters, resource availability, and configuration. Carbon uses the Moab Workload Manager as its scheduler. The resources used by each job (primarily the CPU time) are tracked and managed by the Gold Allocation Manager.

Job processing

A job consists of a job script and applications called therein. The figure at the top shows schematically how a typical job is processed.

Unless told otherwise, Torque will execute the job script on your behalf on the first node allocated by Moab, in your $HOME directory, and typically using your login shell. It is the script's responsibility to:

- Change to a suitable working directory.

- Recognize and use the other nodes of the job.

  - This is generally done by directing an MPI program to start on the nodes listed in the job's $PBS_NODEFILE.

  - A job is perfectly free to run more than one program, such as several MPI programs in sequence.

- Set or propagate environment variables as needed to other nodes.
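As a rough illustration of the second responsibility, the following sketch summarizes the node file that Torque provides. It relies on Torque writing one hostname per allocated core into the file; the function name `summarize_nodefile` is made up for this example and is not a Carbon or Torque command.

```shell
#!/bin/bash
# Sketch: summarize the nodes Torque allocated to a job.
# Torque writes one hostname per allocated core into the node file,
# so a node with several cores in use appears several times.
# summarize_nodefile is a hypothetical helper, not part of Torque.
summarize_nodefile() {
    local nodefile="$1"
    local ncores nnodes
    ncores=$(( $(wc -l < "$nodefile") ))          # total lines = total cores
    nnodes=$(( $(sort -u "$nodefile" | wc -l) ))  # distinct hostnames = nodes
    echo "$nnodes nodes, $ncores cores"
}

# Inside a job script one might call (only meaningful on the first node):
#   cd "$PBS_O_WORKDIR"
#   summarize_nodefile "$PBS_NODEFILE"
```

The core count obtained this way is what one would typically hand to the MPI launcher as the number of processes.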

Environment variables

The handling of environment variables across the cores and nodes that a job uses varies with the capabilities and configuration choices of the various MPI implementations.

- Variables usually are propagated core-to-core for all processes on the first node.

- Variables usually are not propagated node-to-node.

- Consequences

  - You must configure modules and $PATH in your $HOME/.bashrc file to help mpirun and other mechanisms locate your programs on each compute node. Otherwise mpirun will fail as soon as more than one node is involved, either by not finding the binary itself or some of its required shared libraries, or more insidiously, using different shared libraries.

  - Both the variable $PBS_NODEFILE and the file it points to are only available on the first node.

  - To propagate (export) environment variables, use mpirun/mpiexec. However, there is no standard or quasi-standard across MPI flavors.

    - OpenMPI's mpirun selectively exports some variables and supports the -x flag to export additional variables.

    - Intel's mpiexec/mpiexec.hydra offer -envlist NAME,NAME,... instead. See Sec. 2.4 in the Reference Manual at [1].
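Because the export syntax differs between MPI flavors, a job script may find it convenient to build the flags from one list of variable names. The sketch below does this for the two forms mentioned above (`-x NAME` for OpenMPI, `-envlist NAME,NAME,...` for Intel MPI). The helper names and `./my_program` are hypothetical.

```shell
#!/bin/bash
# Sketch: build export flags for two MPI flavors from a list of variable names.
# Both helper functions are hypothetical and only assemble strings.

# OpenMPI style: one "-x NAME" per variable.
openmpi_export_flags() {
    local flags="" name
    for name in "$@"; do
        flags="$flags -x $name"
    done
    printf '%s\n' "${flags# }"   # drop the leading space
}

# Intel MPI style: a single "-envlist NAME,NAME,...".
intel_export_flags() {
    local IFS=,                  # "$*" joins arguments with the first IFS character
    printf '%s\n' "-envlist $*"
}

# Possible use in a job script (word splitting of the result is intended):
#   mpirun $(openmpi_export_flags OMP_NUM_THREADS LD_LIBRARY_PATH) ./my_program
#   mpiexec.hydra $(intel_export_flags OMP_NUM_THREADS LD_LIBRARY_PATH) ./my_program
```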

See also: directory layout.

Submitting and managing jobs

Job Script

A job is described to Torque by a job script, which in most cases is a shell script containing directives for Torque.
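A minimal sketch of such a script follows, written out through a here-document so that it can be created from the command line. The job name, the resource request (`nodes=2:ppn=8`, `walltime=1:00:00`), and the program `./my_program` are placeholders chosen for illustration; the `-machinefile` option is accepted by common MPI launchers, but check the documentation of the MPI flavor you use.

```shell
#!/bin/bash
# Sketch: write a minimal Torque job script to example.job.
# All resource values and ./my_program are illustrative placeholders.
cat > example.job <<'EOF'
#!/bin/bash
#PBS -N example
#PBS -l nodes=2:ppn=8
#PBS -l walltime=1:00:00
#PBS -j oe

# Torque starts the script in $HOME; change to the submission directory.
cd "$PBS_O_WORKDIR"

# Start one MPI process per allocated core listed in the node file.
mpirun -machinefile "$PBS_NODEFILE" -np "$(wc -l < "$PBS_NODEFILE")" ./my_program
EOF

# Submit from a login node with:
#   qsub example.job
```

Lines starting with `#PBS` are comments to the shell but directives to Torque, which is why the script remains an ordinary shell script.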

Submission

To submit a job, use Torque's qsub command on a login node.

qsub [-A accountname] [options] jobfile

To review qsub operation and options, consult the following:

man qsub

qsub --help

- Advanced usage

- You can submit follow-up jobs from within a parent job script while it runs on a compute node, but it may be better to leverage job dependencies.

- Requesting Resources

- Alternatively, use Moab's msub command.

Queues

See Queues and Policies.

Checking job status

Query your jobs using commands similar to those on other HPC clusters. You can use either Torque commands or Moab commands. Both access the same information, but through different routes, and offer different display styles.

To get the regular output format:

qstat [-u $USER]

showq [-u $USER]

To get an alternate format (e.g. showing job names):

qstat -a [-u $USER]

showq -n [-u $USER]

You can always use -u $USER to show only your jobs.

Getting extra information

Show the nodes where a job runs:

qstat -n [-1] jobnum

Give full information such as submit arguments and run directories, and do not wrap long lines ("-1" is the digit "one"):

qstat -f [jobnum] [-1]

To diagnose problems with "stuck" jobs, use the -s option, or simply Moab's checkjob command. The last few lines of output give past events of the job and its current status.

qstat -s jobnum

checkjob -v jobnum

Peeking at job output files

Each job has associated stdout and stderr streams, which are gathered on its first node and are copied back only at job end time, according to the #PBS directives -e, -o, or -j [eo|oe]. If the user does not explicitly capture any output in the script (using shell redirections ">"), it can be difficult to assess a job's progress.

To inspect these streams while the job is running, and also to review a job's script, use the qpeek command:

qpeek jobnum

qpeek -eo jobnum

qpeek -s jobnum

Read its manual page for full documentation:

qpeek --man

This command is a Carbon re-implementation of a Torque "contrib" script.

Changing jobs after submission

Use qalter to modify attributes of a job.

Only attributes specified on the command line will be changed.

If any of the specified attributes cannot be modified for any reason, none of that job's attributes will be modified.

qalter [-l resource] […] jobnumber …

qalter -A accountspec jobnumber …

- Most attributes can be changed only before the job starts. Others, notably mail options and job dependencies, can be meaningfully adjusted after start.

- Advanced usage

  - Extending the walltime resource of an already running job requires Torque administrator permission. You yourself can extend prior to running, and shorten always. Warning: In either case, the new walltime limit will be measured from start, not as remaining time. If a new walltime limit is shorter than the elapsed time, the job will be killed right away.

  - You cannot modify the job script itself after submission. You will have to remove and resubmit the job.

Contact me (stern) to arrange for interventions.
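Since a new walltime limit counts from the job's start, shortening a running job requires adding the already elapsed time to the time you still want to grant. The following sketch does that arithmetic and formats the result as HH:MM:SS; the function name `walltime_from_start` is made up for this example.

```shell
#!/bin/bash
# Sketch: compute the walltime value to pass to qalter so that a running job
# ends "remaining" seconds from now, given it has already run "elapsed" seconds.
# walltime_from_start is a hypothetical helper, not a Torque command.
walltime_from_start() {
    local elapsed="$1" remaining="$2"
    local total=$(( elapsed + remaining ))
    printf '%02d:%02d:%02d\n' \
        $(( total / 3600 )) $(( total % 3600 / 60 )) $(( total % 60 ))
}

# Example: the job has run for 2 hours and should get 30 more minutes.
walltime_from_start 7200 1800    # prints 02:30:00

# The result would then be used as:
#   qalter -l walltime=02:30:00 jobnumber
```

Passing 00:30:00 instead would set a limit below the elapsed time and, per the warning above, kill the job right away.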

Removing jobs

To retract a queued job or kill an already running job:

qdel jobnumber

Torque epilogue scripts will immediately remove remaining user processes and user files from the used compute nodes upon job termination, be it natural or after qdel.

Interactive node access

There are two ways to interactively access nodes:

- Requesting an interactive job

qsub -I [-l nodes=…]

- This is a special Torque job submission that will wait for the requested nodes to become available, and then you will be dropped into a login shell on the first core of the first node. $PBS_NODEFILE is set as in regular (non-interactive) jobs.

- Side-access a node that already runs a job, such as to debug or inspect running processes or node-local files.

- You can use ssh to interactively access any compute node on which a job of yours is running (use qstat -n jobnum to find out the node names). As soon as a node no longer runs at least one of your jobs, your ssh session to that node will be terminated. You may find the following commands helpful:

/usr/sbin/lsof -u $USER

/usr/sbin/lsof -c command_name

/usr/sbin/lsof -p pid

- List open files; see the lsof man page.

ps -fu $USER

- List your own processes.

psuser

- A Carbon-specific wrapper for ps to list user-only processes (yours and other regular users', excluding system processes).

Job notification mails

You can request a notification message to be sent to you when a job begins, ends, or aborts. The message is rather terse and looks like this:

Subject: PBS Job 281399.sched1.carboncluster

PBS Job Id: 281399.sched1.carboncluster

Job Name: test.job

Exec host: n103/0

Execution terminated

Exit_status=0

resources_used.cput=00:03:00

resources_used.mem=123kb

resources_used.vmem=240kb

resources_used.walltime=00:03:04

In the above, Exit_status=0 reflects the shell exit status, where 0 means success.

The following means your job was terminated because it ran beyond its allocated walltime:

Exit_status=271

- The shell exit status is this number mod 256, in this case 271 % 256 = 15, corresponding to SIGTERM.
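The same arithmetic can be done in the shell, for instance when scanning many notification mails. This is a small sketch; `exit_status_to_signal` is a hypothetical helper, and `kill -l` is the shell builtin that translates a signal number into its name.

```shell
#!/bin/bash
# Sketch: reduce a Torque Exit_status to the shell exit status (mod 256)
# and look up the matching signal name.
# exit_status_to_signal is a hypothetical helper.
exit_status_to_signal() {
    echo $(( $1 % 256 ))
}

sig=$(exit_status_to_signal 271)
echo "Exit_status=271 -> $sig (SIG$(kill -l "$sig"))"
# prints: Exit_status=271 -> 15 (SIGTERM)
```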

Recording where a job ran

The job notification mails do not include $PBS_O_WORKDIR, so you will have to record that information yourself. One way to do that is to log each job's number and working directory at the beginning of the job:

#!/bin/bash

#PBS -l nodes=…

#PBS -l walltime=…

#PBS -m bea

cd "$PBS_O_WORKDIR"

echo "$PBS_JOBID $PWD" >> "$HOME/jobdirs"

…

To query a job's directory, run:

grep jobnumber ~/jobdirs
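A plain grep also matches job numbers that merely contain the search string (searching for 28139 would also find 281399). The sketch below compares the numeric part of the job ID exactly. It assumes the log format written above, that is, one line per job with the full job ID followed by the directory; the function name `jobdir` is made up for this example.

```shell
#!/bin/bash
# Sketch: look up a job's working directory with an exact match on the
# numeric part of the job ID (the text before the first dot).
# jobdir is a hypothetical helper; the log file defaults to ~/jobdirs.
jobdir() {
    local jobnum="$1"
    local file="${2:-$HOME/jobdirs}"
    local id dir
    while read -r id dir; do
        # id looks like 281399.sched1.carboncluster; keep only 281399
        if [ "${id%%.*}" = "$jobnum" ]; then
            echo "$dir"
        fi
    done < "$file"
}

# Usage:
#   jobdir 281399
```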

Sending mail while a job is running

It may be useful to have a job automatically "phone home" sometime after it started. This can be accomplished at job submission time by arranging a mail to be sent, using a few added commands in the job script.

#!/bin/bash

#PBS …

cd "$PBS_O_WORKDIR"

# arrange notification mail

(

sleep 600 # time offset parameter, in seconds

tail -n 20 somefile.out | mailx -s "$PBS_JOBID: partial log" "$USER"

) &

# main work here

…

Explanation:

- The commands inside ( … ) & run in a background track alongside the main work.

- The first command, sleep, delays further action (in this track only) by the specified time in seconds.

  - To emphasize minutes, write sleep $(( 10 * 60 )).

  - To set the time relative to the walltime limit, leverage the $PBS_WALLTIME variable, e.g. as in sleep $(( PBS_WALLTIME - ( minutes * 60 ) )).

- The tail command is a stand-in for any inspection command you might want to run. An even simpler command could be ls -lt. You could also use several commands enclosed by parentheses, ( cmd1; cmd2; ... ), to construct a more elaborate mail body.

- The mailx command, following the pipe symbol |, reads the output of your inspection command and sends you a mail with the subject specified by the -s option.

- If the main part of the job finishes before the pre-mail sleep is over, no mail will be sent.
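The walltime-relative delay can be wrapped in a small function that also guards against a negative result, which would occur if the requested margin exceeds the walltime limit. This is a sketch under the assumption, implied by the arithmetic above, that $PBS_WALLTIME holds the limit in seconds; `delay_before_limit` is a hypothetical name.

```shell
#!/bin/bash
# Sketch: seconds to sleep so that the mail goes out "minutes" before the
# walltime limit. Returns 0 if the limit is shorter than the margin.
# delay_before_limit is a hypothetical helper.
delay_before_limit() {
    local walltime="$1" minutes="$2"
    local delay=$(( walltime - minutes * 60 ))
    if [ "$delay" -lt 0 ]; then
        delay=0
    fi
    echo "$delay"
}

# Possible use inside the background track of a job script:
#   ( sleep "$(delay_before_limit "$PBS_WALLTIME" 10)"
#     tail -n 20 somefile.out | mailx -s "$PBS_JOBID: partial log" "$USER" ) &
```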

Note: The Moab scheduler also supports triggers, but they run in a different environment, not on an allocated node.