HPC/Submitting and Managing Jobs: Difference between revisions

Revision as of 02:48, June 2, 2011

Introduction

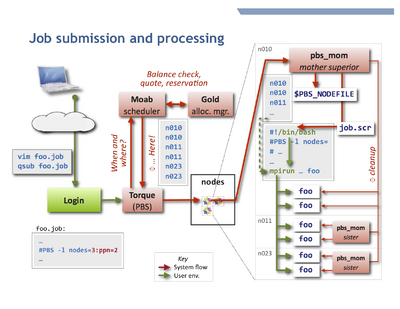

To accept and run jobs, Carbon uses the Torque Resource Manager, which is a variant of PBS. A separate software component called a scheduler chooses the nodes and the start time for each job according to the job parameters, resource availability, and configuration. Carbon uses the Moab Workload Manager as its scheduler. Further, the resources used by each job (primarily the CPU time) are managed by the Gold Allocation Manager.

The adjacent figure shows schematically how a typical job is processed. Unless otherwise told, Torque will execute the job on your behalf on the first node allocated by Moab, in your $HOME directory, and typically using your login shell. It is the job's responsibility to:

- Change to a suitable working directory.

- Recognize and use the other nodes of the job.

  - This is usually done by directing an MPI program to start on the nodes listed in the job's $PBS_NODEFILE.

  - A job is perfectly free to run more than one program, such as several MPI programs in sequence.

- Set or propagate environment variables as needed.

  - Environment variables usually are propagated core-to-core on the first node.

  - Environment variables usually are not propagated node-to-node.

    - In particular, you must configure modules and $PATH in your $HOME/.bashrc file to help mpirun and other mechanisms locate your programs on each compute node. Otherwise, mpirun will fail, typically as soon as more than one node is involved.

    - Further, both the variable $PBS_NODEFILE and the file it points to are only available on the first node.

    - Environment variables can be propagated (exported) via mpirun/mpiexec, but there is no standard or quasi-standard way across MPI flavors.

      - OpenMPI's mpirun selectively exports some variables and supports the -x flag to export additional variables.

      - Intel's mpiexec/mpiexec.hydra offer -envlist NAME,NAME,... instead. See Sec. 2.4 in the Reference Manual at [1].
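The responsibilities listed above can be sketched in a few lines of shell. This is only an illustration under stated assumptions, not Carbon's recommended script: nodefile_summary is a hypothetical helper, ./my_program and OMP_NUM_THREADS are placeholders, and the sketch assumes that Torque writes one line per allocated core to the node file and sets $PBS_O_WORKDIR to the directory from which the job was submitted.

```shell
#!/bin/bash
# Hypothetical helper (not part of Torque): report how many core slots and
# how many distinct nodes a node file lists, assuming one line per core.
nodefile_summary() {
    local nodefile="$1"
    local slots nodes
    slots=$(grep -c . "$nodefile")
    nodes=$(sort -u "$nodefile" | grep -c .)
    echo "slots=$slots nodes=$nodes"
}

# The remainder only makes sense inside a running job, so it is guarded.
if [ -n "${PBS_NODEFILE:-}" ] && [ -r "$PBS_NODEFILE" ]; then
    # 1. Change to a suitable working directory.
    cd "${PBS_O_WORKDIR:-$HOME}" || exit 1

    # 2. Recognize the other nodes of the job.
    nodefile_summary "$PBS_NODEFILE"

    # 3. Propagate variables; the flag depends on the MPI flavor (placeholders):
    #    OpenMPI:   mpirun -x OMP_NUM_THREADS -machinefile "$PBS_NODEFILE" ./my_program
    #    Intel MPI: mpiexec.hydra -envlist OMP_NUM_THREADS ./my_program
fi
```

Outside of a job the guarded part is skipped, so the helper can be tried on any text file that lists one host name per line.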

See also: directory layout.

Submitting and managing jobs

Job Script

A job is described to Torque by a job script, which in most cases is a shell script beginning with PBS directives. These are lines that the shell ignores as comments but that Torque interprets.

- Example Job Script.

- Interpretation of PBS directives ends at the first executable statement in the script.

- Advanced usage

  - The job script may be written in any scripting language, such as Perl or Python. The interpreter is specified in the first script line in Unix hash-bang syntax, such as #!/usr/bin/perl, or using the qsub -S path_list option.

  - The default directive token #PBS can be changed or unset entirely with the qsub -C option; see qsub, sec. Extended Description.
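As a minimal sketch of how directives and executable statements interact, consider the script below. The job name, the node and core counts, and the walltime are assumed values for illustration, not Carbon defaults; the variable job_dir is likewise just a name chosen for this example.

```shell
#!/bin/bash
#PBS -N example_job
#PBS -l nodes=2:ppn=8
#PBS -l walltime=1:00:00
#PBS -j oe

# First executable statement: Torque stops reading directives here.
job_dir="${PBS_O_WORKDIR:-$PWD}"
cd "$job_dir" || exit 1

#PBS -m abe
# The line above looks like a directive but comes too late: Torque ignores
# it, and the shell treats it as an ordinary comment.

echo "Job ${PBS_JOBID:-(not under Torque)} running in $job_dir"
```

Because the directives are plain comments to the shell, the same file can also be run directly for a quick syntax check before it is submitted.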

Submission

To submit a job, use Torque's qsub command on a login node.

qsub [-A accountname] [options] jobfile

To review qsub operation and options, consult the following:

man qsub

qsub --help

- Advanced usage

- You can submit follow-up jobs from within a parent job script while it runs on a compute node, but it may be better to leverage job dependencies.

- Requesting Resources

- Moab's msub command does not work in Carbon's current server constellation.
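Job dependencies, mentioned above as an alternative to submitting follow-up jobs from within a running job, can be sketched as follows. The function submit_chain and the variable QSUB_CMD are hypothetical names introduced for this example; the sketch relies on qsub printing the new job's ID on standard output and on Torque's afterok dependency type.

```shell
#!/bin/bash
# QSUB_CMD is a hypothetical override hook so that the function can be
# dry-run with a stand-in command; by default it calls the real qsub.
QSUB_CMD="${QSUB_CMD:-qsub}"

# Submit the given job scripts so that each one starts only after the
# previous one has finished successfully (dependency type "afterok").
submit_chain() {
    local prev="" jobid script
    for script in "$@"; do
        if [ -z "$prev" ]; then
            jobid=$("$QSUB_CMD" "$script") || return 1
        else
            jobid=$("$QSUB_CMD" -W "depend=afterok:$prev" "$script") || return 1
        fi
        echo "$script: $jobid"
        prev="$jobid"
    done
}

# Usage on a login node (script names are placeholders):
#   submit_chain step1.sh step2.sh step3.sh
```

All jobs are queued at once from the login node, and the scheduler holds each one until its predecessor has completed.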

Queues

See Queues and Policies.

Checking job status

Query your jobs using commands similar to those on other HPC clusters. You can use either Torque commands or Moab commands. Both access the same information, but through different routes, and offer different display styles.

To get the regular output format:

qstat [-u $USER]

showq [-u $USER]

To get an alternate format (e.g. showing job names):

qstat -a [-u $USER]

showq -n [-u $USER]

You can always use -u $USER to show only your jobs.

Getting extra information

Show the nodes where a job runs:

qstat -n [-1] jobnum

Give full information such as submit arguments and run directories, and do not wrap long lines ("-1" is the digit "one"):

qstat -f [jobnum] [-1]
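The unwrapped output of qstat -f lends itself to simple text processing. The sketch below extracts the distinct node names of a job; job_nodes is a hypothetical helper, and it assumes that the exec_host attribute has the form node/core+node/core+..., which may differ between Torque versions.

```shell
#!/bin/bash
# Hypothetical helper: read "qstat -f -1 jobnum" output on stdin and print
# the distinct node names found in the exec_host attribute.
job_nodes() {
    sed -n 's/^[[:space:]]*exec_host = //p' |
        tr '+' '\n' |
        sed 's,/.*$,,' |
        sort -u
}

# Usage (jobnum is a placeholder):
#   qstat -f -1 jobnum | job_nodes
```

The -1 flag matters here: without it, a long exec_host value is wrapped over several lines and the pattern would only catch the first of them.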

To diagnose problems with "stuck" jobs, use Moab's checkjob command. The last few lines of output give past events of the job and its current status.

checkjob -v jobnum

Peeking

To inspect the output of a running job, or to review its script file, use qpeek:

qpeek jobnum

qpeek -eo jobnum

qpeek -s jobnum

Read its manual page for full documentation:

qpeek --man

This command is a Carbon re-implementation of a Torque "contrib" script.

Changing jobs after submission

Use qalter to modify attributes of a job.

Only attributes specified on the command line will be changed.

If any of the specified attributes cannot be modified for any reason, none of that job's attributes will be modified.

qalter [-l resource] […] jobnumber …

qalter -A accountspec jobnumber …

- Most attributes can be changed only before the job starts. Others, notably mail options and job dependencies, can be meaningfully adjusted after the job has started.

- Advanced usage

  - Extending the walltime resource of an already running job requires Torque administrator permission. You yourself can extend it prior to running, and shorten it always. Warning: In either case, the new walltime limit will be measured from start, not as remaining time. If a new walltime limit is shorter than the elapsed time, the job will be killed right away.

  - You cannot modify the job script itself after submission. You will have to remove and resubmit the job.

Contact me (stern) to arrange for these interventions.
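Because a new walltime limit counts from the start of the job, the value to request is the elapsed time plus the time that should remain. A small sketch of that arithmetic follows; the function names are hypothetical, and times are assumed to be in HH:MM:SS form.

```shell
#!/bin/bash
# Convert HH:MM:SS to seconds; awk reads "08" as decimal 8, not octal.
hms_to_sec() {
    echo "$1" | awk -F: '{ print $1 * 3600 + $2 * 60 + $3 }'
}

# Convert seconds back to HH:MM:SS.
sec_to_hms() {
    printf '%02d:%02d:%02d\n' $(( $1 / 3600 )) $(( $1 % 3600 / 60 )) $(( $1 % 60 ))
}

# new_walltime ELAPSED EXTRA: the limit to request so that EXTRA time
# remains from now, given that the limit is counted from job start.
new_walltime() {
    sec_to_hms $(( $(hms_to_sec "$1") + $(hms_to_sec "$2") ))
}

new_walltime 02:30:00 01:00:00    # prints 03:30:00
```

For a job that has run for two and a half hours and should get one more hour, the limit to set, or to ask the administrator to set, would thus be walltime=03:30:00 rather than 01:00:00.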

Removing jobs

To retract a queued job or kill an already running job:

qdel jobnumber

Torque epilogue scripts will immediately remove remaining user processes and user files from the used compute nodes upon job termination, be it natural or after qdel.

Interactive node access

There are two ways to interactively access nodes:

- Requesting an interactive job

qsub -I [-l nodes=…]

- This is a special Torque job submission that will wait for the requested nodes to become available, and then you will be dropped into a login shell on the first core of the first node. $PBS_NODEFILE is set as in regular (non-interactive) jobs.

- Side-access a node that already runs a job, such as to debug or inspect running processes or node-local files.

- You can use ssh to interactively access any compute node on which a job of yours is running (use qstat -n jobnum to find out the node names). As soon as a node no longer runs at least one of your jobs, your ssh session to that node will be terminated. You may find the following commands helpful:

/usr/sbin/lsof -u $USER

/usr/sbin/lsof -c command_name

/usr/sbin/lsof -p pid

- List open files; see the lsof man page.

ps -fu $USER

- List your own processes.

psuser

- A Carbon-specific wrapper for ps to list user-only processes (yours and other regular users', excluding system processes).