HPC/Submitting and Managing Jobs: Difference between revisions

m (moved HPC/Submitting Jobs to HPC/Submitting and Managing Jobs)

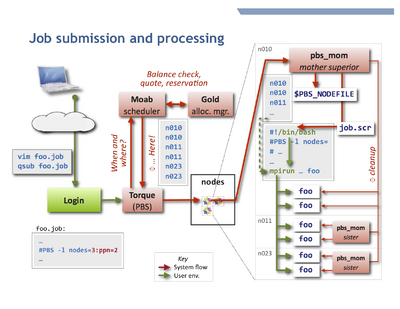

[[Image:HPC job flow.png|right|400px|thumb|Processing flow of a job on Carbon.]] | |||

Revision as of 23:29, May 23, 2011

User environment

We use the environment-modules package to manage user applications. Learn more at HPC/Software/Environment.

See also: directory configuration.

Introduction

To accept and run jobs, Carbon uses the Torque Resource Manager, which is a variant of PBS. The determination of nodes and start time for jobs is performed by a separate software component called a scheduler, which for Carbon is the Moab Workload Manager.

Further, the resources used by each job (primarily the CPU time) are managed by the Gold Allocation Manager.

The adjacent figure shows schematically how a job is processed.

Submitting and managing jobs

qsub [-A accountname] [options] jobfile

For details on options:

man qsub
qsub --help

More details are at the Torque Manual, in particular the qsub man page.

The single main queue is batch and need not be specified. All job routing decisions are handled by the scheduler. In particular, short jobs are accommodated by a daily reserved node and by backfill scheduling, i.e. "waving forward" while a big job waits for full resources to become available.

Using the debug queue

For testing job processing and your job environment, use qsub -q debug on the command line, or the following in a job script:

#PBS -q debug

The debug queue accepts jobs under the following conditions:

nodes ≤ 2
ppn ≤ 4
walltime ≤ 1:00:00
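The limits above can be checked before submitting. The following is a minimal sketch, not part of Carbon's tooling: the function names walltime_to_seconds and fits_debug_queue are hypothetical, and the walltime is assumed to be given in the [[hh:]mm:]ss form.

```shell
#!/bin/sh
# Convert a walltime string ([[hh:]mm:]ss) to seconds.
walltime_to_seconds() {
    echo "$1" | awk -F: '{ s = 0; for (i = 1; i <= NF; i++) s = s * 60 + $i; print s }'
}

# Succeed (exit status 0) if a request fits the debug queue limits quoted
# above: nodes <= 2, ppn <= 4, walltime <= 1:00:00.
# Arguments: nodes ppn walltime
fits_debug_queue() {
    nodes=$1
    ppn=$2
    secs=$(walltime_to_seconds "$3")
    [ "$nodes" -le 2 ] && [ "$ppn" -le 4 ] && [ "$secs" -le 3600 ]
}

if fits_debug_queue 2 4 0:30:00; then
    echo "request fits the debug queue"
else
    echo "request exceeds the debug queue limits; use the default queue"
fi
```

A request that passes this check could then be submitted with qsub -q debug; anything larger belongs in the default batch queue.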

Checking job status

Query your jobs using commands similar to those on other HPC clusters. You can use either PBS/Torque commands or Moab commands. Both access the same information, but through different routes, and offer different display styles.

To get the regular output format:

qstat [-u $USER]
showq [-u $USER]

To get an alternate format (e.g. showing job names):

qstat -a [-u $USER]
showq -n [-u $USER]

You can always use -u $USER to show only your jobs.

Getting extra information

Show the nodes where a job runs:

qstat -n [-1] jobnum

Give full information such as submit arguments and run directories, and do not wrap long lines ("-1" is the digit "one"):

qstat -f [jobnum] [-1]
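Because the unwrapped output keeps each attribute on one line of the form "name = value", it is easy to pick out a single field in a script. The sketch below assumes that layout; the helper job_field and the sample text are illustrative only, not real output from Carbon.

```shell
#!/bin/sh
# Hypothetical helper: print the value of one attribute from "qstat -f"
# style text read on stdin (one "name = value" pair per line).
job_field() {
    awk -v key="$1" '{
        line = $0
        sub(/^[ \t]+/, "", line)          # drop leading indentation
        n = index(line, " = ")            # split at the first " = "
        if (n > 0 && substr(line, 1, n - 1) == key) {
            print substr(line, n + 3)
            exit
        }
    }'
}

# Made-up sample standing in for the output of: qstat -f jobnum -1
sample_qstat_output() {
    cat <<'EOF'
Job Id: 12345.example
    Job_Name = job_name
    job_state = R
    queue = batch
    Resource_List.walltime = 00:10:00
EOF
}

sample_qstat_output | job_field job_state
```

On the cluster, the sample function would be replaced by the real command, for example qstat -f jobnum -1 | job_field job_state.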

To diagnose problems with "stuck" jobs, use Moab's checkjob command. The last few lines of output give past events of the job and its current status.

checkjob -v jobnum

Changing jobs after submission

You can use qalter to modify the attributes of a job before it starts.

Specify the attributes to be modified on the command line. All others will not be changed.

If any of the specified attributes cannot be modified for any reason, none of that job's attributes will be modified.

qalter [-l resource] […] jobnumber …
qalter -A accountspec jobnumber …

You cannot modify the job script itself after submission. You will have to remove and resubmit the job. For exceptional situations, contact me (stern) to arrange for a manual intervention.
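As an illustration of the qalter syntax, the sketch below changes the walltime request of one or more queued jobs. The wrapper set_walltime, the DRY_RUN switch, and the job numbers are hypothetical; by default the function only prints the command it would run, so it can be tried without affecting any job.

```shell
#!/bin/sh
# Hypothetical wrapper around "qalter -l walltime=...".
# Arguments: walltime jobnumber [jobnumber ...]
# With DRY_RUN=1 (the default here) the command is printed, not executed.
set_walltime() {
    walltime=$1
    shift
    if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "qalter -l walltime=$walltime $*"
    else
        qalter -l "walltime=$walltime" "$@"
    fi
}

# Placeholder job numbers; substitute the numbers reported by qstat.
set_walltime 2:00:00 12345 12346
```

Setting DRY_RUN=0 would pass the request to qalter, which only succeeds while the job has not yet started.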

Removing jobs

To retract a queued job or terminate an already running job:

qdel jobnumber

Example job file

Here is a sample job script for an MPI application in the default user environment (OpenMPI over Infiniband interconnect):

#!/bin/bash
## Basics: Number of nodes, processors per node (ppn), and walltime (hhh:mm:ss)
#PBS -l nodes=5:ppn=8
#PBS -l walltime=0:10:00
#PBS -N job_name
#PBS -A account
## File names for stdout and stderr. If not set here, the defaults
## are <JOBNAME>.o<JOBNUM> and <JOBNAME>.e<JOBNUM>
#PBS -o job.out
#PBS -e job.err
## send mail at begin, end, abort, or never (b, e, a, n)
#PBS -m ea

# change into the directory where qsub was executed
cd "$PBS_O_WORKDIR"

# count allocated cores
nprocs=$(wc -l < "$PBS_NODEFILE")

# start MPI job over default interconnect
mpirun -machinefile "$PBS_NODEFILE" -np $nprocs \
    programname

- If your program reads from files or takes options and/or arguments, use and adjust one of the following forms:

mpirun -machinefile $PBS_NODEFILE -np $nprocs \
    programname < run.in

mpirun -machinefile $PBS_NODEFILE -np $nprocs \
    programname -options arguments < run.in

mpirun -machinefile $PBS_NODEFILE -np $nprocs \
    programname < run.in > run.out 2> run.err

- In the last form, anything after programname is optional. If you use specific redirections for stdout or stderr as shown (>, 2>), the job-global files job.out and job.err declared earlier will remain empty or contain only output from your shell startup files (which should really be silent) and from the rest of your job script.

- Infiniband (OpenIB) is the default (and fast) interconnect mechanism for MPI jobs. This is configured through the environment variable $OMPI_MCA_btl.

- To select ethernet transport (e.g. for embarrassingly parallel jobs), specify an -mca option:

mpirun -machinefile $PBS_NODEFILE -np $nprocs \
    -mca btl self,tcp \
    programname
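The core count in the sample script relies on Torque writing one line per allocated core into $PBS_NODEFILE. The sketch below reproduces that layout with a temporary stand-in file so it can be run outside a job; the host names n101 and n102 are made up, and a request of nodes=2:ppn=4 is assumed.

```shell
#!/bin/sh
# Stand-in for $PBS_NODEFILE: each node name appears once per allocated
# core, so nodes=2:ppn=4 gives 8 lines. Host names are invented.
nodefile=$(mktemp)
for host in n101 n102; do
    for core in 1 2 3 4; do
        echo "$host"
    done
done > "$nodefile"

# Same counting as in the job script; tr strips padding that some wc
# implementations add.
nprocs=$(wc -l < "$nodefile" | tr -d ' ')
# Distinct node names give the number of nodes.
nnodes=$(sort -u "$nodefile" | wc -l | tr -d ' ')

echo "cores: $nprocs, nodes: $nnodes, cores per node: $((nprocs / nnodes))"
rm -f "$nodefile"
```

Inside a real job, the temporary file would simply be replaced by "$PBS_NODEFILE".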

The account parameter

The parameter for option -A account is in most cases the CNM proposal, specified as follows:

- cnm123 (3 digits) for proposals below 1000

- cnm01234 (5 digits, 0-padded) for proposals from 1000 onwards

- user (the actual string "user", not your user name) for a limited personal startup allocation

- staff for discretionary access by staff
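The naming rule for proposal accounts can be written as a small helper. This is a sketch only: the function name cnm_account is hypothetical, and padding proposal numbers below 100 with zeros to three digits is an assumption that the rule above does not state explicitly.

```shell
#!/bin/sh
# Hypothetical helper: build the -A account string from a proposal number.
# Below 1000: "cnm" plus 3 digits; from 1000 onwards: "cnm" plus 5 digits,
# zero-padded. Zero-padding below 100 is an assumption.
cnm_account() {
    if [ "$1" -lt 1000 ]; then
        printf 'cnm%03d\n' "$1"
    else
        printf 'cnm%05d\n' "$1"
    fi
}

cnm_account 123     # prints cnm123
cnm_account 1234    # prints cnm01234
```

The result could be passed on the command line, for example qsub -A "$(cnm_account 1234)" jobfile.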

You can check your account balance in hours as follows:

mybalance -h

gbalance -u $USER -h

Advanced node selection

You can refine the node selection (normally done via the PBS resource -l nodes=…) to

finely control your node and core allocations. You may need to do so for the following reasons:

- select specific node hardware generations,

- permit shared vs. exclusive node access,

- vary PPN across the nodes of a job,

- accommodate multithreading (OpenMP).

See HPC/Submitting Jobs/Advanced node selection for these topics.

Interactive node access

There are two ways to interactively access nodes:

- Requesting an interactive job

qsub -I [-l nodes=…]

- This is a special Torque job submission that will wait for the requested nodes to become available, and then you will be dropped into a login shell on the first core of the first node. $PBS_NODEFILE is set as in regular (non-interactive) jobs.

- Side-access a node that already runs a job, such as to debug or inspect running processes or node-local files.

- You can use ssh to interactively access any compute node on which a job of yours is running (use qstat -n jobnum to find out the node names). As soon as a node no longer runs at least one of your jobs, your ssh session to that node will be terminated. You may find the following commands helpful:

/usr/sbin/lsof -u $USER
/usr/sbin/lsof -c command_name
/usr/sbin/lsof -p pid

- list open files; see the lsof man page.

ps -fu $USER

- list your own processes.

psuser

- a Carbon-specific wrapper for ps to list user-only processes (yours and other regular users', excluding system processes).